Open ChatGPT right now and type: “Who is a good orthopaedic surgeon in [your city]?” Give it ten seconds. It will name someone. It might describe their specialty, their clinic, maybe even the kinds of conditions they treat. And it will do this with complete confidence – as if it has done careful research on your behalf.

Now ask yourself: would your name appear in that answer?

For most doctors, the honest answer is no. And the reason is not that they are less qualified than whoever did appear. The reason is that the doctors who appear in ChatGPT recommendations share a specific set of signals that AI systems use to identify credible, trustworthy medical sources. Doctors who have those signals get recommended. Doctors who lack them get skipped entirely – regardless of how many years they have been practising or how good their clinical outcomes are.

This article breaks down exactly how that selection works, why the gap between visible and invisible doctors keeps widening, and what you can do about it.

The Problem Most Doctors Don’t Know They Have

Here is what makes this situation uncomfortable: most doctors who are invisible in ChatGPT recommendations have no idea they are invisible. They are busy running a practice. They have a website. They might even rank reasonably well on Google for a few local searches. So the assumption is that their online presence is fine.

But ChatGPT does not work like Google. It does not serve up a list of links and let the patient choose. It forms an opinion – and then delivers that opinion as a recommendation. If your digital presence has not given ChatGPT enough quality information to form a confident opinion about you, it simply recommends someone else.

That someone else might have fewer qualifications. They might have less experience. But they will have something you don’t: a dense, consistent, well-structured online presence that AI systems can read, verify, and trust.

This is the core of the problem. AI search is not about being the best doctor. It is about being the most legible doctor to an AI system.

How ChatGPT Actually Decides Which Doctors to Recommend

ChatGPT is a large language model. It was trained on a vast dataset – websites, medical publications, directories, news, research papers, review platforms, and more. During that training process, it built an understanding of which doctors and healthcare practices exist, what they specialise in, and how credible they are based on what the wider web says about them.

When a patient then asks ChatGPT to recommend a specialist, the model generates a response based on that internal knowledge. When its web search is switched on, or triggered automatically as it now often is, it also runs a live search to supplement that knowledge with current information.

The selection process comes down to three things:

- How much consistent, accurate information exists about this doctor across multiple platforms and sources

- How credible those sources are – a mention on a hospital website carries far more weight than a mention in a general directory

- How clearly the doctor’s content is structured so the AI can extract and summarise it into a direct answer

Doctors who score well across all three consistently appear in ChatGPT recommendations. Doctors who are weak on even two of these three are functionally invisible.

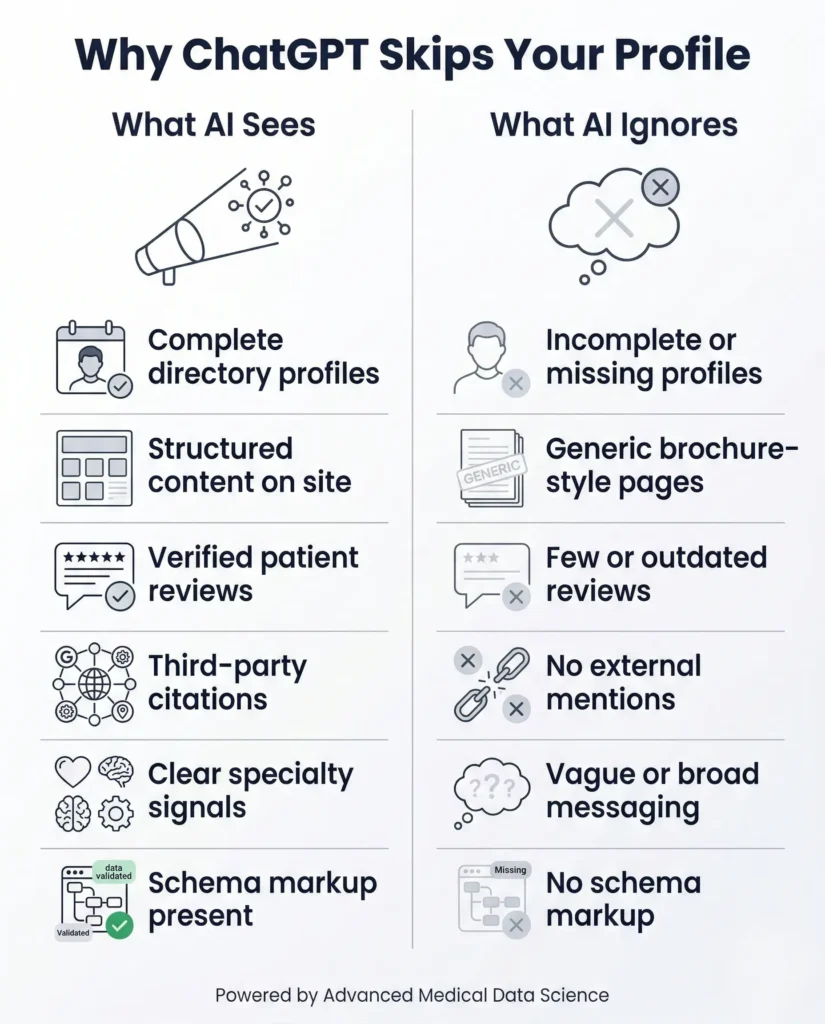

6 Signals That Separate Doctors in ChatGPT Recommendations From Those Who Never Appear

Across our work with medical practices on AI search visibility, a clear pattern has emerged. The doctors who consistently appear in ChatGPT recommendations share these six signals. The ones who don’t are missing most of them.

1. Complete and Consistent Directory Profiles

ChatGPT aggregates data from across the web. When it encounters a doctor’s name, it looks for confirmation of who that person is from multiple independent sources. If your name, specialty, clinic name, address, and credentials appear consistently on Google Business Profile, Healthgrades, Practo, Doximity, Zocdoc, and your hospital’s staff page, that consistency builds what is often called entity confidence.

Entity confidence is simple: the more sources that agree on who you are and what you do, the more confidently AI can recommend you. Inconsistent information – different spellings of your name, different clinic addresses, mismatched credentials – creates confusion that reduces AI confidence and recommendation frequency.
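One practical way to check your own consistency is to copy the same core fields (name, specialty, clinic, address, phone) from each profile into one place and compare them. The Python sketch below does that comparison. The profile data is hypothetical, and the normalisation rules (lower-casing, turning punctuation into spaces, collapsing whitespace) are an assumption about which differences count as trivial; this is an audit aid, not a model of how any AI system actually scores entities.

```python
import re

FIELDS = ("name", "specialty", "clinic", "address", "phone")


def normalise(value: str) -> str:
    """Lower-case, turn punctuation into spaces, and collapse whitespace,
    so that "Dr. A. Sharma" and "Dr A Sharma" compare as equal."""
    value = re.sub(r"[^\w\s]", " ", value.lower())
    return " ".join(value.split())


def find_inconsistencies(profiles: dict[str, dict[str, str]]) -> dict[str, set[str]]:
    """Return {field: distinct values} for every field on which the profiles
    disagree. A missing field counts as an empty value, so it is reported
    whenever another profile does fill that field in."""
    conflicts = {}
    for field in FIELDS:
        values = {normalise(p.get(field, "")) for p in profiles.values()}
        if len(values) > 1:
            conflicts[field] = values
    return conflicts


if __name__ == "__main__":
    # Hypothetical data copied by hand from two profiles.
    profiles = {
        "Google Business Profile": {
            "name": "Dr. A. Sharma", "specialty": "Rheumatology",
            "address": "12 Park Road, Pune",
        },
        "Hospital staff page": {
            "name": "Dr A Sharma", "specialty": "Rheumatology",
            "address": "12 Park Rd, Pune",
        },
    }
    for field, values in find_inconsistencies(profiles).items():
        print(field, sorted(values))
```

In the example, "Dr. A. Sharma" and "Dr A Sharma" normalise to the same value, but "Park Road" and "Park Rd" are still flagged. That is deliberate: the goal is identical information everywhere, so it is safer to fix the variant than to rely on any system treating the two as equivalent.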

Most doctors have claimed maybe one or two of these profiles. The ones appearing in ChatGPT recommendations have claimed and fully completed eight or more, all with identical information.

2. A Website That Answers Real Patient Questions

This is where the biggest gap sits. Most doctor websites are essentially digital brochures. They list services, display credentials, and include a contact form. They do not actually answer the questions patients bring to ChatGPT.

Patients ask ChatGPT things like:

- When do I actually need surgery for a slipped disc?

- What’s the difference between a neurologist and a neurosurgeon?

- How long does IVF treatment typically take?

- What should I expect at my first cardiology appointment?

When ChatGPT searches for answers to these questions, it looks for content that directly addresses them. If your website has a page titled “Our Services” with three bullet points listing what you do, that page is useless to AI. If your website has an article titled “When Is Spine Surgery Actually Necessary – And When Is It Not?” with a detailed, clear, patient-friendly explanation, that content can be extracted, summarised, and cited in a ChatGPT response that mentions your name.

The question-and-answer format is not just good writing practice. It is how doctors get into ChatGPT recommendations.
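Question-based pages can also be labelled as such in machine-readable form using the FAQPage schema type, one of the schema types listed under signal 6 below. The Python sketch below builds a schema.org FAQPage object from question-and-answer pairs and prints it as JSON-LD. The question and placeholder answer are illustrative only; on a real page, the answer text in the markup should match the answer visibly published on that page.

```python
import json


def faq_page(pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        # Each question on the page becomes one Question entity,
        # with its answer attached as acceptedAnswer.
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


if __name__ == "__main__":
    page = faq_page([
        (
            "When is spine surgery actually necessary?",
            "Placeholder: the same plain-language answer published on the page.",
        ),
    ])
    # Paste the output into the page inside <script type="application/ld+json">.
    print(json.dumps(page, indent=2))
```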

3. Verified Patient Reviews Across Multiple Platforms

Patient reviews are one of the most powerful trust signals AI systems use when evaluating doctors. Not because AI reads reviews the way a human does, but because the volume, recency, and spread of reviews across platforms tell AI something important: this is an active, trusted, practising doctor with a real patient base.

A doctor with 12 reviews from 2021, all on Google, sends a very weak signal. A doctor with 90 reviews spread across Google, Healthgrades, and Practo, with new reviews coming in every month, sends a strong one.
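AI systems do not publish how they weigh reviews, so any precise scoring would be guesswork. What you can do is track the three dimensions named above (volume, recency, spread) in your own records. The Python sketch below assumes a hand-maintained list of reviews; the 90-day recency window is an arbitrary choice, and the monthly check uses the five-reviews-across-two-platforms target suggested later in this article.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Review:
    platform: str  # e.g. "Google", "Healthgrades", "Practo"
    posted: date


def summarise(reviews: list[Review], today: date, window_days: int = 90) -> dict:
    """Report volume (total), recency (count inside the window) and spread
    (count per platform) for a list of reviews."""
    cutoff = today - timedelta(days=window_days)
    return {
        "volume": len(reviews),
        "recent": sum(1 for r in reviews if r.posted >= cutoff),
        "platforms": Counter(r.platform for r in reviews),
    }


def meets_monthly_target(reviews: list[Review], year: int, month: int,
                         minimum: int = 5, min_platforms: int = 2) -> bool:
    """True if the given month has at least `minimum` new reviews
    spread over at least `min_platforms` platforms."""
    in_month = [r for r in reviews if (r.posted.year, r.posted.month) == (year, month)]
    return len(in_month) >= minimum and len({r.platform for r in in_month}) >= min_platforms


if __name__ == "__main__":
    # Hypothetical profile: 12 reviews from 2021, all on Google.
    stale = [Review("Google", date(2021, m, 15)) for m in range(1, 13)]
    print(summarise(stale, today=date(2025, 1, 31)))
```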

The content of reviews matters too. A review that says “very professional and caring doctor” gives AI almost nothing to work with. A review that says “Dr. Sharma correctly diagnosed my autoimmune condition after two years of misdiagnosis and explained the treatment plan in detail” contains specific clinical language that AI can associate with a particular specialty and level of competence.

You cannot write reviews for patients – but you can create a post-appointment process that makes leaving a detailed review easy and natural.

4. Third-Party Citations From Credible Sources

This is the signal most doctors have the least of, and it is one of the most important.

When a credible third party mentions your name – a hospital website, a medical journal, a health publication like WebMD or Healthline, a university medical department, a news article about a health topic – that creates what is essentially a vote of confidence in the AI’s dataset. The more such citations exist, the stronger the signal that you are a recognised authority in your field.

Think of it this way. If ChatGPT encounters your name only on your own website, it has only your word that you are credible. If it encounters your name on your hospital’s staff page, in a quote in a health article, listed on your state medical council’s directory, and cited in a health news piece, it has four independent sources confirming your authority. That is the difference between appearing in recommendations and not.

Building these citations takes time, but the starting points are concrete:

- Make sure you are fully listed on your hospital or clinic’s official website with your specialty and credentials

- Update your listing on your medical association’s directory

- Offer yourself as a quoted expert to local health journalists

- Contribute a guest article or expert column to a regional health publication

- If you have published research, ensure it is properly indexed and attributed

5. Clear Specialty Signals Across All Platforms

AI systems are not good at making assumptions. If your profiles and website content are vague about what you actually specialise in, AI will not assign you to the right category when recommending doctors for specific conditions.

A cardiologist whose website and profiles clearly state “interventional cardiologist specialising in coronary artery disease and heart failure management” will appear in far more relevant ChatGPT searches than a cardiologist whose website says “comprehensive cardiology services for all heart conditions.”

Specificity is not limiting. It is targeting. The more precisely you define your specialty – including the specific conditions you treat, the procedures you perform, and the patient populations you serve – the more accurately AI can match you to patient queries that fit exactly what you do.

This is why a solo specialist with a tightly defined niche often appears in ChatGPT recommendations more frequently than a large multi-specialty hospital with vague, department-level descriptions.

6. Schema Markup on Your Website

Schema markup is structured data embedded in your web pages, usually as a JSON-LD script in the page’s HTML, that tells search engines and AI crawlers exactly what type of entity a page represents. Without it, AI has to guess. With it, you tell the AI directly: this person is a medical doctor, their specialty is orthopaedic surgery, their clinic is located at this address, they have these qualifications, and they treat these conditions.

The most useful schema types for doctors include:

- Person schema – your name, credentials, specialty, affiliation

- MedicalOrganization schema – your clinic or practice details

- FAQPage schema – structured Q&A content blocks

- Review schema – markup for patient reviews and ratings

- MedicalCondition schema – for condition-specific content pages

Schema is one of the few technical changes that can produce a noticeable improvement in AI search visibility relatively quickly. If your current website has no schema markup – as is the case for many doctor websites – fixing this is one of the highest-impact things you can do right now.
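As an illustration, the Python sketch below generates JSON-LD for a doctor (Person) who works at a clinic (MedicalClinic, a schema.org subtype of MedicalOrganization) and wraps it in the script tag that goes into the page’s HTML. Every name, qualification, address, and phone number is a hypothetical placeholder, and the chosen properties are one reasonable option rather than a required set. Check the final markup with a validator such as the Schema Markup Validator or Google’s Rich Results Test.

```python
import json

# Hypothetical placeholder details; replace with your own, and keep them
# identical to what your directory profiles say.
doctor = {
    "@context": "https://schema.org",
    "@type": "Person",
    "name": "Dr. Example Name",
    "honorificSuffix": "MBBS, MS (Orth)",
    "jobTitle": "Orthopaedic Surgeon",
    # Conditions and procedures you want to be associated with.
    "knowsAbout": ["Knee replacement", "Sports injuries", "Slipped disc"],
    "worksFor": {
        "@type": "MedicalClinic",
        "name": "Example Orthopaedic Clinic",
        "telephone": "+00 0000 000000",
        "address": {
            "@type": "PostalAddress",
            "streetAddress": "12 Example Road",
            "addressLocality": "Example City",
            "postalCode": "000000",
            "addressCountry": "IN",
        },
    },
}


def jsonld_script(data: dict) -> str:
    """Wrap a schema.org object in the <script> tag used for JSON-LD."""
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(jsonld_script(doctor))
```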

Why Experience and Qualifications Are Not Enough

This is the part that frustrates a lot of doctors when they first hear it. You have twenty years of practice. You trained at a top institution. You have performed thousands of procedures with excellent outcomes. And yet a newer colleague with a well-optimised digital presence is the one appearing in ChatGPT recommendations for searches in your own specialty.

That frustration is completely understandable. But here is the reality: AI systems cannot directly observe clinical skill. They cannot assess your outcomes. They cannot evaluate your bedside manner or your diagnostic accuracy. The only way they can assess your credibility is by analysing what the public digital record says about you.

If that record is thin, inconsistent, or poorly structured, AI will default to whoever’s record is more complete – even if that person has fewer years of experience.

This is not a flaw in AI search. It is how all information-based systems work. A medical journal does not accept a paper based on how good its author is as a person. It judges the paper on the quality and clarity of the research as presented. AI search works the same way. Your digital presence is the paper. Make it good enough to be cited.

The Real Cost of Being Invisible in ChatGPT Recommendations

A year ago, not appearing in AI search results was a minor inconvenience. Today, it is a significant patient acquisition problem. In two years, it will likely be the difference between a full appointment book and a struggling practice.

Here is why the stakes are rising so fast. Patient search behaviour is shifting at a rate most healthcare providers have not yet registered. A growing proportion of patients – particularly younger patients and those making decisions about elective or specialist care – are starting their doctor search not with Google, but with an AI assistant. They ask ChatGPT or Gemini to explain their symptoms, then ask it to recommend a specialist, then book directly with whoever was named.

If you are not in that recommendation, you are not even in consideration. The patient does not then switch to Google and dig further. They book with whoever AI suggested. That is how short the decision cycle has become.

According to data published by Search Engine Land, ChatGPT is now processing over one billion web searches per month. Healthcare queries make up a significant and growing share of those searches. The practices investing in AI search visibility now are building a patient acquisition channel that compounds over time. The ones waiting are ceding ground to competitors they do not even know are overtaking them.

How Doctors in ChatGPT Recommendations Built Their Visibility – A Practical Path

There is no single action that fixes AI invisibility. It is a combination of consistent signals built over time. But there is a clear order of priority that makes sense for most practices starting from scratch.

Start with your entity foundation. Claim and fully complete every relevant directory profile. This is the fastest, lowest-cost action, and it starts feeding consistent data into the sources that AI models learn from and that live search results draw on. Prioritise Google Business Profile, Healthgrades, Doximity, Practo, and your hospital’s staff page if applicable.

Then fix your website content. Identify the ten questions your patients most commonly ask before booking an appointment. Write a proper, in-depth article for each one. Each article should be at least 1,000 words, written in plain, clear language, and structured with question-based headings. This content becomes the raw material AI extracts when constructing a recommendation.
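Before publishing, each draft can be checked against the two mechanical guidelines above: length and question-based headings. The Python sketch below assumes drafts are written in Markdown, with headings on lines starting with "#"; that format is an assumption, and the check says nothing about whether the content is clinically accurate or clearly written.

```python
def audit_article(text: str, min_words: int = 1000) -> dict:
    """Count words and report which Markdown headings are not phrased
    as questions (i.e. do not end with '?')."""
    headings, plain_lines = [], []
    for line in text.splitlines():
        if line.startswith("#"):
            headings.append(line.lstrip("#").strip())
        # Strip heading markers so "##" is not counted as a word.
        plain_lines.append(line.lstrip("#"))
    word_count = len(" ".join(plain_lines).split())
    return {
        "word_count": word_count,
        "long_enough": word_count >= min_words,
        "headings": len(headings),
        "question_headings": sum(1 for h in headings if h.endswith("?")),
        "non_question_headings": [h for h in headings if not h.endswith("?")],
    }


if __name__ == "__main__":
    # Hypothetical draft with one question heading and one brochure-style heading.
    draft = "# When Is Spine Surgery Actually Necessary?\n" + "word " * 600 + "\n## Our Services\n" + "word " * 500
    print(audit_article(draft))
```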

Add schema markup. Work with your web developer to implement the relevant schema types for your practice. This is a one-time technical task that significantly improves how AI classifies and recalls your information.

Build your review volume. Create a simple, consistent process for asking patients to leave reviews immediately after a positive appointment. A text message with a direct link to your Google review page, sent within 24 hours, converts at a much higher rate than a verbal ask. Target a minimum of five new reviews per month across at least two platforms.

Then start working on citations. One new credible external mention of your name per month adds up quickly: a hospital staff listing update, a quote in a local health article, an updated medical association directory profile. Each one adds to the body of external evidence that AI uses to confirm your authority.

For a deeper breakdown of how to build AI search signals across your entire online presence, the guide on AI search optimisation for doctors covers the full framework step by step.

How to Test Whether You Are Appearing in ChatGPT Recommendations Right Now

This takes about three minutes and is worth doing before anything else.

Open ChatGPT. Make sure web search is enabled. Then ask it the following questions, substituting your actual specialty and city:

- “Who are the best [your specialty] doctors in [your city]?”

- “Can you recommend a [your specialty] for [condition you commonly treat] in [your city]?”

- “I’m looking for a [your specialty] clinic in [your area] – who would you suggest?”

Note everything that comes back. Who appears? What information does ChatGPT provide about them? Which platforms does it reference? Then run the same test on Gemini and Perplexity.

What you find is your current baseline. If your name does not appear at all, you have a visibility gap to close. If a competitor appears and you want to understand why, their profile is essentially your research brief – look at every platform they are listed on, every piece of content they have published, every external site that mentions them.

That comparison will show you exactly where your gaps are and what to prioritise first.
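If you repeat the test every month, it helps to record the answers in a consistent form. The Python sketch below assumes you paste each assistant’s answer in by hand (it does not call ChatGPT, Gemini, or Perplexity) and counts, per assistant, how many saved answers mention each name. Names and answers are hypothetical, and the simple case-insensitive text match will miss variants such as "Dr Mehta" without the full stop, so list every spelling you care about.

```python
from collections import defaultdict


def mention_table(answers: dict[tuple[str, str], str],
                  names: list[str]) -> dict[str, dict[str, int]]:
    """For each name, count how many saved answers per assistant mention it.
    `answers` maps (assistant, prompt) to the pasted answer text."""
    table = {name: defaultdict(int) for name in names}
    for (assistant, _prompt), text in answers.items():
        lowered = text.lower()
        for name in names:
            # Case-insensitive substring match; spelling variants are not merged.
            if name.lower() in lowered:
                table[name][assistant] += 1
    return {name: dict(counts) for name, counts in table.items()}


if __name__ == "__main__":
    answers = {
        ("ChatGPT", "best rheumatologist in Pune"): "You could consider Dr. Mehta or Dr. Rao.",
        ("ChatGPT", "rheumatologist for lupus in Pune"): "Dr. Mehta is often mentioned.",
        ("Perplexity", "best rheumatologist in Pune"): "Options include Dr. Rao.",
    }
    for name, counts in mention_table(answers, ["Dr. Mehta", "Dr. Rao", "Dr. Sharma"]).items():
        print(name, counts)
```

A name with an empty result, like the third one in the example, is the visibility gap described above; saving each month’s table gives you the baseline to compare against.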

If you want to see a real example of how AI search visibility works in practice, the LLM SEO guide for clinics walks through the process with concrete clinic examples.

The Window Is Still Open – But It Is Narrowing

Right now, the majority of doctors have not yet seriously addressed their visibility in AI search. That is actually an opportunity. The authority signals that get doctors into ChatGPT recommendations take time to build – but the practices that start building them now will hold positions that competitors will find increasingly hard to displace.

AI search is not a future trend to plan for. It is the current reality of how a growing number of patients find and choose their healthcare providers. The gap between doctors who appear in ChatGPT recommendations and those who don’t is already producing real differences in patient enquiries and appointment bookings.

The six signals covered in this article – consistent directory profiles, question-based website content, verified reviews across multiple platforms, third-party citations, clear specialty signals, and schema markup – are your starting points. None of them require a huge budget. All of them require consistent, informed effort.

Start with the one that represents your biggest gap. Build from there. Test your visibility every month. The doctors who do this work now are the ones patients will find when they ask ChatGPT who to see next year.